Why most founder pricing is wrong

Most founder pricing decisions follow this process:

- Look at competitor prices.

- Average them.

- Subtract 20% to “be competitive.”

- Pick a round number.

This is not strategy. It’s matching. It leaves money on the table when you’re underpriced and chases buyers away when you’re priced for the wrong segment.

Real pricing validation produces a number with three properties:

- It maps to a defined buyer’s willingness to pay (you have evidence, not vibes).

- It clears your unit economics (you make money at this price, not just revenue).

- You can defend it under pushback (when a prospect says “that seems expensive,” you have a sentence that works).

This guide is the validation discipline that produces that number.

The four numbers

Every pricing decision rests on four numbers. Know all four before you set a price.

1. Demand-side: SOM revenue at price X. Does the realistic-capture math clear your minimum viable business? (SOM buyer count) × (1-5% conversion) × (price X) = year-1 SOM revenue. If this number doesn't cover your minimum business threshold, the price or the SOM is wrong.

2. Voice-of-customer: willingness-to-pay range from interviews. What did the buyer spend the last time they had this problem? Run 20+ Mom Test interviews. Past-spend data beats hypothetical "would you pay" answers, and the pattern in their spending bounds your acceptable price band.

3. Quantitative: Van Westendorp band. Four pricing questions, plotted on a chart, define the band. Survey 20-50 buyers with the four classic price-perception questions. The intersection points produce a Lower Bound, Upper Bound, Optimal Price Point, and Indifference Price Point.

4. Unit economics: LTV/CAC at price X. Does the business actually scale at this price? LTV (price × average customer lifetime) divided by CAC ((sales + marketing spend) / new customers). LTV/CAC > 3 is the venture-scale threshold. Below it, raise the price, lower CAC, or accept it as a lifestyle business.

1. SOM revenue at price X

How much revenue does your serviceable obtainable market produce at price X if a realistic share of it converts?

(SOM buyer count) × (realistic conversion rate, 1-5%) × (price X) = SOM revenue at X

If SOM revenue at your candidate price doesn’t clear your minimum viable business threshold, the price is wrong (or the SOM is). See market research for SOM math.
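Here is a minimal Python sketch of the SOM check. It assumes X is a monthly price and that every converted buyer pays for all 12 months of year 1. The helper names, buyer count, price, and $60K threshold are hypothetical placeholders.

```python
def som_revenue(som_buyers: int, conversion: float, monthly_price: float, months: int = 12) -> float:
    """(SOM buyer count) x (conversion rate) x (price), annualised.

    Assumes a monthly price and that converted buyers pay every month of year 1.
    """
    if not 0.0 <= conversion <= 1.0:
        raise ValueError("conversion must be a fraction between 0 and 1")
    return som_buyers * conversion * monthly_price * months


def clears_threshold(som_buyers: int, monthly_price: float, min_revenue: float,
                     conversions: tuple[float, float] = (0.01, 0.05)) -> tuple[float, float, str]:
    """Check year-1 SOM revenue at the pessimistic and optimistic conversion rates."""
    low = som_revenue(som_buyers, conversions[0], monthly_price)
    high = som_revenue(som_buyers, conversions[1], monthly_price)
    if low >= min_revenue:
        verdict = "clears at the low conversion rate"
    elif high >= min_revenue:
        verdict = "clears only at the high conversion rate"
    else:
        verdict = "price or SOM is wrong"
    return low, high, verdict


if __name__ == "__main__":
    # Hypothetical: 5,000 reachable buyers, $29/month, $60K/year minimum viable business.
    print(clears_threshold(5_000, 29, 60_000))
```

With these inputs, year-1 SOM revenue ranges from $17,400 to $87,000. A $60K business only works if conversion lands near the top of the 1-5% band.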

2. Willingness-to-pay range from interviews

What price range do real buyers describe as acceptable? Get this from 20+ interviews with the Mom Test discipline applied. The question that works: “When you last tried to solve this problem, what did you spend or budget?”

If the typical answer is “$10K/year on a competitor,” you can charge $100-300/month (with a path to $500+). If the typical answer is “nothing, I just deal with it,” you’re not validated. The problem isn’t painful enough.
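One way to turn past-spend answers into a band is sketched below in Python. It assumes you have normalized each interviewee's answer to annual dollars, with 0 meaning "nothing, I just deal with it." The 50% paying-share cutoff for treating the problem as validated is an illustrative assumption, not a rule from this guide. The interview data is hypothetical.

```python
import statistics


def summarise_past_spend(annual_spend: list[float], min_paying_share: float = 0.5) -> dict:
    """Summarise interview past-spend answers as a monthly spend band.

    annual_spend: one figure per interviewee, in dollars per year; 0 means they spent nothing.
    min_paying_share: assumed cutoff; below it, treat the problem as not painful enough.
    """
    monthly = [a / 12 for a in annual_spend if a > 0]
    paying_share = len(monthly) / len(annual_spend)
    if paying_share < min_paying_share or len(monthly) < 2:
        return {"validated": False, "paying_share": paying_share}
    # Quartiles of what paying buyers already spend per month (inclusive method).
    q1, median, q3 = statistics.quantiles(monthly, n=4, method="inclusive")
    return {
        "validated": True,
        "paying_share": paying_share,
        "monthly_q1": q1,
        "monthly_median": median,
        "monthly_q3": q3,
    }


if __name__ == "__main__":
    # Hypothetical answers from 12 interviews (annual dollars).
    answers = [0, 0, 1200, 2400, 3600, 6000, 6000, 9600, 12000, 12000, 18000, 24000]
    print(summarise_past_spend(answers))
```

Here 10 of 12 interviewees already spend money on the problem. The middle half of them spend about $350-1,000 per month, and that spending pattern is what bounds the acceptable price band.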

3. Van Westendorp band

Run the Van Westendorp Price Sensitivity Meter on 20-50 buyers. The four-question survey produces:

- Lower bound (Point of Marginal Cheapness): below this, buyers doubt quality

- Upper bound (Point of Marginal Expensiveness): above this, buyers won’t even consider

- Optimal Price Point (OPP): where the “too cheap” and “too expensive” curves intersect, i.e. as many buyers reject the price as too cheap as reject it as too expensive

- Indifference Price Point (IPP): where “cheap” and “expensive” curves cross, the price where buyers feel neither over- nor underpaying

The Van Westendorp band is wider and more honest than any single number. Use it as a constraint: your price has to live inside this band.
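The following minimal Python sketch shows how the four points fall out of raw survey answers. It evaluates each cumulative curve on a $1 price grid and returns the first grid price where a falling curve meets a rising one. It uses one common convention: PMC is where “too cheap” meets “not cheap,” and PME is where “too expensive” meets “not expensive.” Some practitioners pair the curves differently. The `Response` fields, the grid step, and the four-respondent survey are hypothetical. A real run needs the 20-50 responses this guide recommends.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Curve = Callable[[float], float]


@dataclass
class Response:
    too_cheap: float      # so cheap you'd doubt the quality
    cheap: float          # a bargain
    expensive: float      # getting expensive, but you'd still consider it
    too_expensive: float  # so expensive you wouldn't consider it


def curve(responses: list[Response], holds: Callable[[Response, float], bool]) -> Curve:
    """Share of respondents for whom holds(response, price) is true, as a function of price."""
    return lambda p: sum(holds(r, p) for r in responses) / len(responses)


def first_crossing(prices: list[float], falling: Curve, rising: Curve) -> Optional[float]:
    """First grid price where a non-increasing curve meets or drops below a non-decreasing one."""
    for p in prices:
        if falling(p) <= rising(p):
            return p
    return None


def van_westendorp(responses: list[Response], step: float = 1.0) -> dict[str, Optional[float]]:
    lo = min(r.too_cheap for r in responses)
    hi = max(r.too_expensive for r in responses)
    prices = [lo + i * step for i in range(round((hi - lo) / step) + 1)]

    too_cheap = curve(responses, lambda r, p: r.too_cheap >= p)          # falls as price rises
    cheap = curve(responses, lambda r, p: r.cheap >= p)                  # falls
    not_cheap = curve(responses, lambda r, p: r.cheap < p)               # rises
    expensive = curve(responses, lambda r, p: r.expensive <= p)          # rises
    not_expensive = curve(responses, lambda r, p: r.expensive > p)       # falls
    too_expensive = curve(responses, lambda r, p: r.too_expensive <= p)  # rises

    return {
        "PMC": first_crossing(prices, too_cheap, not_cheap),
        "PME": first_crossing(prices, not_expensive, too_expensive),
        "OPP": first_crossing(prices, too_cheap, too_expensive),
        "IPP": first_crossing(prices, cheap, expensive),
    }


if __name__ == "__main__":
    survey = [
        Response(10, 15, 20, 25),
        Response(20, 30, 45, 50),
        Response(30, 40, 60, 80),
        Response(40, 55, 75, 100),
    ]
    print(van_westendorp(survey))
```

For this toy survey the sketch returns PMC $31, OPP $31, IPP $41, and PME $50, so the acceptable band runs roughly $31-50. Finer grids or interpolation between grid points would refine the exact crossing prices.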

4. Unit economics at price X

For SaaS, the question is: at price X, is LTV/CAC > 3?

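- LTV (lifetime value) = monthly price × average customer lifetime (in months). A stricter version also multiplies by gross margin, so LTV reflects profit and not just revenue.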

- CAC (customer acquisition cost) = (sales + marketing spend) / new customers

If LTV/CAC isn’t 3+ at your candidate price, your business doesn’t scale. Either raise the price, lower CAC (better channels, better conversion), or accept that the business is a lifestyle business, not a scaling one. All three are valid. Knowing which you’re building is the discipline.
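Here is a minimal Python sketch of the LTV/CAC check. The optional `gross_margin` parameter reflects the common practice of computing LTV on gross profit instead of revenue. All figures are hypothetical.

```python
def ltv(monthly_price: float, avg_lifetime_months: float, gross_margin: float = 1.0) -> float:
    """Lifetime value = price x average lifetime; pass gross_margin < 1 for a profit-based LTV."""
    return monthly_price * avg_lifetime_months * gross_margin


def cac(sales_spend: float, marketing_spend: float, new_customers: int) -> float:
    """Customer acquisition cost = (sales + marketing spend) / new customers."""
    return (sales_spend + marketing_spend) / new_customers


def scales(ltv_value: float, cac_value: float, threshold: float = 3.0) -> bool:
    """True when LTV/CAC reaches the 3x venture-scale threshold."""
    return ltv_value / cac_value >= threshold


if __name__ == "__main__":
    # Hypothetical: $79/month, 24-month average lifetime, $30K S+M spend for 50 new customers.
    acquisition = cac(20_000, 10_000, 50)
    print(ltv(79, 24) / acquisition, scales(ltv(79, 24), acquisition))
    # Same business at an assumed 80% gross margin.
    print(ltv(79, 24, 0.8) / acquisition, scales(ltv(79, 24, 0.8), acquisition))
```

On revenue alone this example clears the bar at about 3.2x. Applying an 80% gross margin drops it to about 2.5x, which flips the verdict and puts you back in "raise the price or lower CAC" territory.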

Test 3-5 prices, not 1

The most common pricing-test mistake is testing one price. A single-price test tells you whether THAT price works, not whether you’re leaving money on the table or chasing buyers away.

Test a spread of 3-5 prices, typically following a geometric progression (each tier roughly 2.5-3x the one below):

| Tier | Price | Test purpose |
|---|---|---|
| Anchor low | $9/mo | Floor. Too cheap to take seriously? |
| Mass | $29/mo | Volume play |
| Premium | $79/mo | Where most SaaS lives |
| Enterprise lite | $199/mo | Test if buyers exist in your space |
| Enterprise | $499/mo | Sanity check |

Don’t ship all 5. The point of the test is to find the elasticity curve so you can pick 1-3 final tiers with confidence.

A common pattern in the data: conversion drops off a cliff between $29 and $79, suggesting your buyer pool splits at around $50/month. You then launch a $29 tier (mass) and a $79 tier (premium), and skip the $9 tier (too cheap, attracts the wrong customers) and the $199+ tiers (no buyer pool yet).
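Once the test data is in, finding the cliff is simple arithmetic. This Python sketch assumes you have recorded one conversion rate per tested price. The rates below are invented to mirror the $29-to-$79 pattern, and the helper names are hypothetical.

```python
def biggest_cliff(results: list[tuple[float, float]]) -> tuple[float, float, float]:
    """Find the adjacent price pair with the steepest relative conversion drop.

    results: (monthly_price, conversion_rate) pairs sorted by price.
    Returns (lower_price, upper_price, share_of_conversion_retained).
    """
    (p_lo, c_lo), (p_hi, c_hi) = min(
        zip(results, results[1:]), key=lambda pair: pair[1][1] / pair[0][1]
    )
    return p_lo, p_hi, c_hi / c_lo


def revenue_per_visitor(results: list[tuple[float, float]]) -> dict[float, float]:
    """Expected monthly revenue per visitor at each tested price (price x conversion)."""
    return {price: price * conv for price, conv in results}


if __name__ == "__main__":
    # Hypothetical test results for a five-tier spread.
    test = [(9, 0.060), (29, 0.045), (79, 0.012), (199, 0.004), (499, 0.002)]
    print(biggest_cliff(test))
    print(revenue_per_visitor(test))
```

In this made-up data, conversion at $79 is about 27% of conversion at $29. That is the steepest drop in the spread, and $29 also earns the most per visitor. The cliff shows where the segment boundary sits, but choosing which tiers to keep is still a judgment call.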

Pricing model matters more than price

Before obsessing over the number, validate the model.

Per-seat (Slack, Notion, Linear)

- Pros: scales naturally with customer; predictable

- Cons: friction at adoption (“I have to budget for the whole team”)

- When to use: B2B where the buyer = the user (team productivity tools)

Per-usage (Stripe, AWS, OpenAI API)

- Pros: aligns price with value delivered; no waste

- Cons: hard to budget; sticker shock on heavy users

- When to use: when value scales with usage (infrastructure, API)

Flat / tiered (Basecamp, ConvertKit, Webflow)

- Pros: predictable billing; easy to understand

- Cons: leaves money on the table for power users; alienates light users

- When to use: when usage doesn’t vary much across customers

Freemium (Notion, Loom, Calendly)

- Pros: massive top-of-funnel, viral potential

- Cons: 95-97% of free users never convert; requires PLG mechanics

- When to use: network effects or virality; marginal cost near zero

Free trial (most SaaS)

- Pros: high conversion (10-30%); buyer sees value before paying

- Cons: requires fast time-to-value and trial-expiration nudge mechanics

- When to use: default for most B2B SaaS

Pick the model based on your buyer’s purchase behavior and your unit economics. Get the model right; tune the number later.
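The freemium vs. free-trial trade-off is mostly conversion arithmetic. This small Python sketch uses the ranges quoted above: 3-5% for freemium (95-97% never convert) and 10-30% for trials. The 100-customer target is a hypothetical input.

```python
# Conversion ranges quoted in this guide, as whole percentages.
CONVERSION_PCT = {
    "freemium": (3, 5),       # 95-97% of free users never convert
    "free trial": (10, 30),   # typical trial-to-paid range
}


def signups_needed(target_customers: int, conversion_pct: int) -> int:
    """Smallest signup count that yields target_customers paying users (integer ceiling division)."""
    return -(-target_customers * 100 // conversion_pct)


if __name__ == "__main__":
    target = 100  # hypothetical goal: 100 paying customers
    for model, (low, high) in CONVERSION_PCT.items():
        print(f"{model}: {signups_needed(target, high)}-{signups_needed(target, low)} signups")
```

Reaching 100 paying customers takes 2,000-3,334 freemium signups versus 334-1,000 trial signups, and every free user still costs something to serve. That gap is why freemium only pays off with network effects, virality, or near-zero marginal cost.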

The willingness-to-pay interview script

Five questions, in this order, with at least 15 target buyers:

- “When you last tried to solve [problem], what did you do?”

- “How much did it cost, in money, time, or attention?”

- “If I told you there was a tool that did X for $Y/month, what would your first reaction be?”

- “What would have to be true for you to pay $Y/month for this?”

- “How would you justify this expense to [boss / partner / yourself]?”

The signal isn’t the price number they give; it’s the answer to question 5, the justification model. If they can articulate the justification cleanly, they’re a real buyer at that price. If they can’t (“I dunno, it just seems like a lot”), they’re not.

Run 15-20 of these. Patterns emerge. The patterns name your price.

Common mistakes

1. Picking a price by averaging competitors. That’s matching, not strategy. Competitor pricing is one input among many.

2. Setting price once and never revisiting. Pricing is an ongoing decision, not a launch one. Annual review minimum.

3. Testing one price point. You learn nothing about elasticity. Test a spread.

4. Asking “how much would you pay?” The answer predicts nothing. Replace it with past-spend and justification questions.

5. Skipping unit economics. A price that doesn’t clear LTV/CAC > 3 is a price you can’t scale at, no matter how well validated the willingness-to-pay is.

6. Free tier by default. Free tiers should be a deliberate choice, not a default. Most products that ship a free tier never recover from the conversion math.

What ShipFit does at this stage

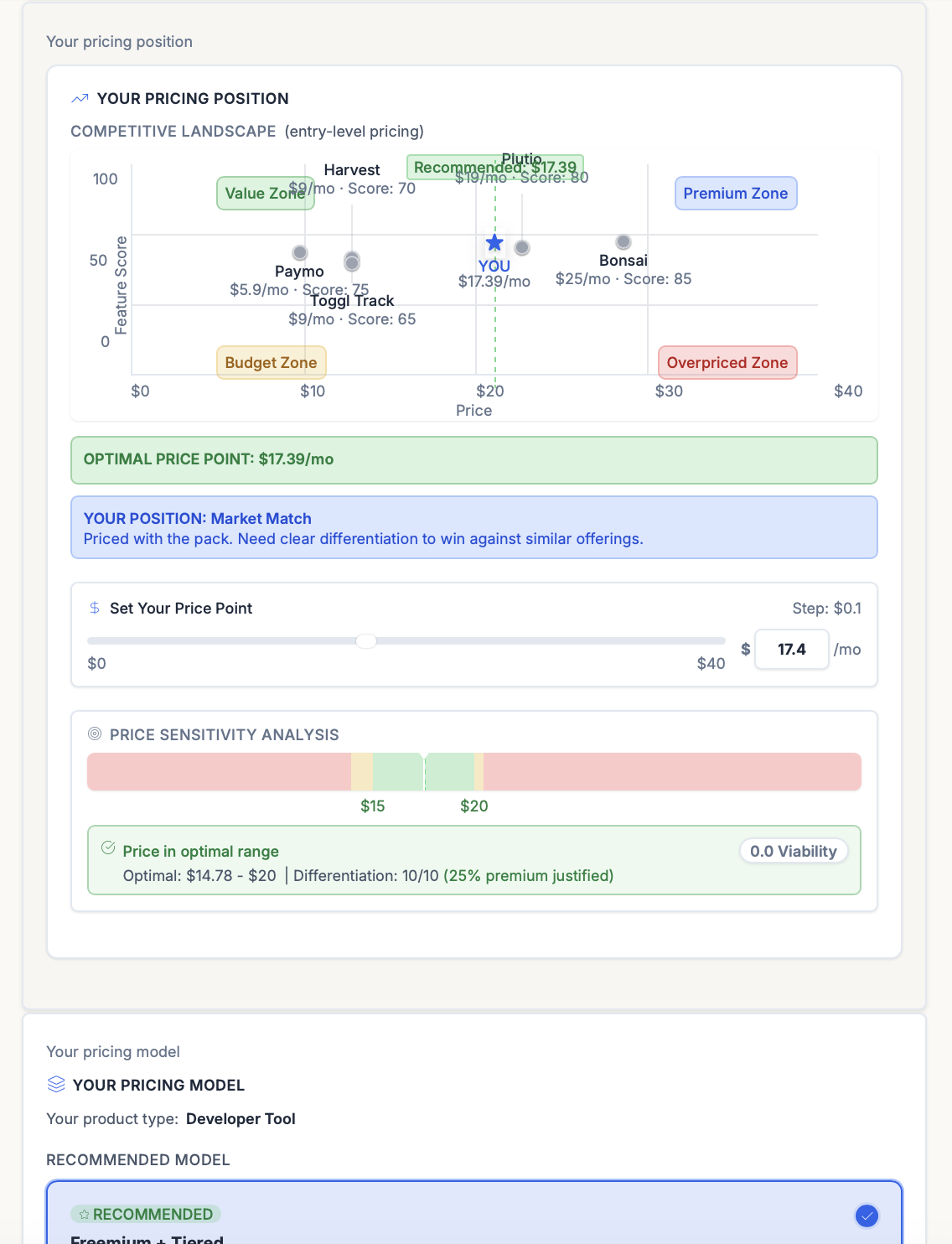

Stage 6 of the 9-step playbook is “How to Charge?”: pricing validation. The output is your Pricing Position: where your candidate price sits in the competitive landscape, scored against four zones.

| Zone | What it means |
|---|---|
| Value Zone | You’re priced below most competitors but above the cheap end. Easy entry, room to raise. |
| Premium Zone | Above competitors. Defensible only if you have a clear differentiator the buyer recognizes. |
| Budget Zone | Cheapest in the market. Signals “not serious” to enterprise buyers. Volume play only. |
| Overpriced Zone | Above the Premium Zone with no matching differentiator. Buyers won’t even consider you. |

The output is a Pricing Position chart placing your candidate price against the Value / Premium / Budget / Overpriced zones, plus an Optimal Price Point recommendation drawn from the Van Westendorp curves.
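This guide doesn’t spell out how the zones are scored, so the Python sketch below is only a rough approximation, not ShipFit’s actual logic. It makes four assumptions:

- Budget means at or below the cheapest competitor.
- Value means at or below the median competitor.
- Premium means above the median, and above the whole pack only with a differentiator.
- Everything else is Overpriced.

Those cut-offs, the function name, and the competitor prices are all assumptions.

```python
import statistics


def pricing_zone(price: float, competitor_prices: list[float], has_differentiator: bool) -> str:
    """Place a candidate price in one of four zones (thresholds are illustrative assumptions)."""
    prices = sorted(competitor_prices)
    if price <= prices[0]:
        return "Budget Zone"       # cheapest in the market
    if price <= statistics.median(prices):
        return "Value Zone"        # above the cheap end, below most competitors
    if price <= prices[-1] or has_differentiator:
        return "Premium Zone"      # above most competitors; needs a differentiator to defend
    return "Overpriced Zone"       # above the whole pack with nothing to justify it


if __name__ == "__main__":
    competitors = [19, 29, 49, 79, 99]  # hypothetical competitor monthly prices
    for candidate, differentiated in [(15, False), (39, False), (79, False), (149, False), (149, True)]:
        print(candidate, differentiated, pricing_zone(candidate, competitors, differentiated))
```

The same $149 price lands in the Premium Zone or the Overpriced Zone depending only on whether a differentiator exists, which is the distinction the table draws.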

The full Stage 6 output:

- Van Westendorp questions phrased for your buyer + product, with the four-curve diagram and the OPP / IPP / PMC / PME labels.

- A pricing position chart placing your candidate price against the competitor pack.

- A pricing model recommendation (per-seat, per-usage, tiered, freemium, etc.) keyed to your buyer profile.

- Tiering guidance with the “what a prospect will say” framing — the objection you should be ready to answer at the price you’ve chosen.

Stage 6 is the most-skipped stage in founder workflows. It’s also the highest-leverage one for revenue per customer. Don’t skip it.

The bottom line

Pricing isn’t a number; it’s a decision made up of four numbers (SOM revenue, WTP range, Van Westendorp band, unit economics) and one structural choice (model). Real pricing validation produces a price you can defend, a model that scales, and a unit-economics story that makes the business work. Founders who do this work charge 2-5x what their peers charge for similar products. That gap is mostly discipline, not market positioning.

Related frameworks

Van Westendorp Price Sensitivity Meter

The Van Westendorp framework uses 4 questions to surface a defensible price range for any product. Here's how to run it, interpret results, and avoid the cheapest mistakes.

Jobs to be Done (JTBD)

Jobs to be Done reframes every product decision: customers don't buy features, they hire products to get a job done. Here's how to apply it without faking it.

The Lean Startup

Eric Ries's Lean Startup, stripped of consultant fluff. Validated learning, Build-Measure-Learn, MVP, pivot or persevere. What it means and where it gets misapplied.

