What “validate” actually means

The word “validate” gets used to mean two completely different things, and the gap between them is where founders lose 6-12 months of their life.

Definition A (what most founders think): Validation = “I told some smart people about my idea and they said it sounded good.”

Definition B (what actually works): Validation = “I generated behavioral evidence that real buyers will pay for this solution. The evidence cost them something. Money, time, or reputation. Not just words.”

Definition A is free. It’s also useless. People are polite, especially smart people, especially in a 1-on-1 conversation where you’ve already invested visible effort. They will encourage you. They will not pay for your product, but they will encourage you, and most founders mistake that encouragement for a prediction.

Definition B is what this guide covers. It’s harder to get. It’s also the only kind of validation that correlates with shipping a product anyone buys.

The four methods that actually produce signal

Listed in increasing order of evidence strength.

- Method 1 · Informational

Mom Test interviews

Past behaviour, not future hypotheticals.

10-15 buyer conversations focused on what they DID last time they had this problem. Signal: 3+ unrelated buyers describing the same pain in the same words.

- Method 2 · Light commitment

Fake Door Test

A landing page for a product that does not exist yet.

Drive 500-2,000 targeted visitors. Weight conversions by signal tier (Gold = credit card / Silver = demo booked / Bronze = time spent / Worthless = email signup). Anything above 1% Gold is strong evidence.

- Method 3 · Real commitment

Paid pre-orders

Money on the table for a product that does not exist.

3-5+ pre-orders from 30 buyer conversations is meaningful. 1 from 100 is a no. A Stripe SetupIntent (card saved, nothing charged yet) or a refundable deposit both work.

- Method 4 · B2B only

Signed letters of intent

A signature from someone with budget authority.

Non-binding written commitment naming the buyer + the contract size + the price they would pay. The friction is the test.

Method 1. Mom Test interviews (informational)

The Mom Test framework by Rob Fitzpatrick (2013) is the foundation. The rule: never mention your idea. Ask about the buyer’s life, their past behavior, their current workarounds. The questions that produce signal are past-tense and specific:

- “Tell me about the last time you had to solve [problem X].”

- “What did you do? Walk me through it step by step.”

- “How much time did it take? How much did it cost?”

- “Who did you talk to about it?”

- “What did you try that didn’t work?”

What you’re looking for: at 10+ interviews, do three or more unrelated buyers describe the same pain in the same words? If yes, you have a real problem worth solving. If no, you have either the wrong buyer profile or the wrong problem framing.
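
One way to check the three-buyer threshold is to tag each interview with the pain phrases the buyer used, then count how many distinct buyers used each phrase. The sketch below is a minimal illustration, not part of the Mom Test itself: the `recurring_pains` function, the note format, and the sample data are all hypothetical, and matching on exact normalized phrases is a crude stand-in for “the same words.”

```python
from collections import defaultdict


def recurring_pains(interviews, min_buyers=3):
    """Return pain phrases mentioned by at least `min_buyers` distinct buyers.

    `interviews` maps a buyer ID to the list of pain phrases you tagged
    in that buyer's interview notes (hypothetical format).
    """
    buyers_by_pain = defaultdict(set)
    for buyer, pains in interviews.items():
        for pain in pains:
            # Normalize case and whitespace so trivial differences
            # do not split one phrase into two.
            buyers_by_pain[pain.strip().lower()].add(buyer)
    return {
        pain: len(buyers)
        for pain, buyers in buyers_by_pain.items()
        if len(buyers) >= min_buyers
    }


# Made-up example data, for illustration only.
notes = {
    "buyer_a": ["chasing invoices by email", "no audit trail"],
    "buyer_b": ["Chasing invoices by email"],
    "buyer_c": ["chasing invoices by email", "slow approvals"],
    "buyer_d": ["slow approvals"],
}
print(recurring_pains(notes))
```

An empty result after 10+ interviews is the “wrong buyer profile or wrong problem framing” case described above.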

What this method does NOT prove: that they will pay you. That’s the next two methods.

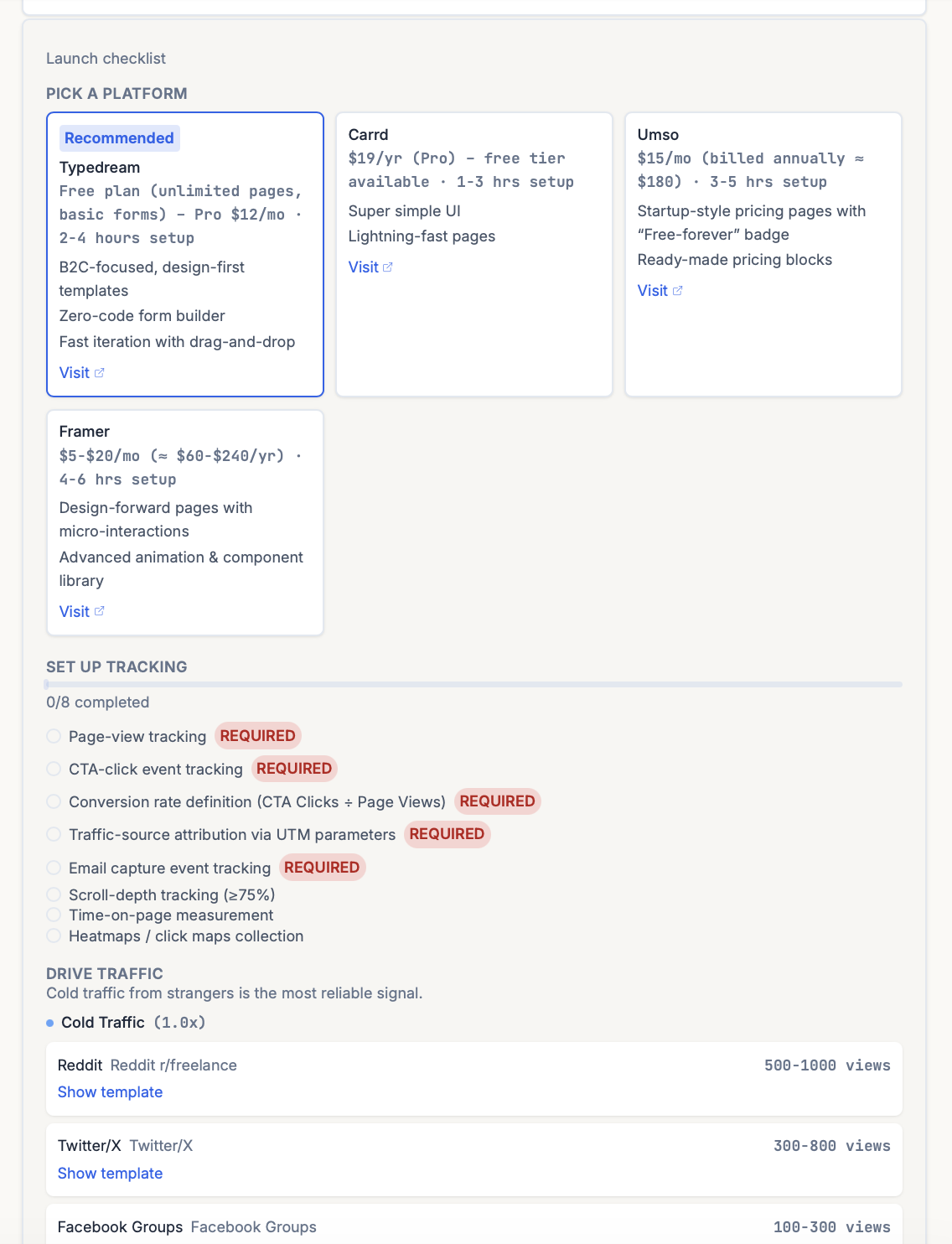

Method 2. Fake Door Test (light commitment)

A Fake Door Test is a landing page for a product that doesn’t exist yet. Drive real traffic to it. See who actually tries to buy. The actions visitors take tell you whether there’s real demand, or just polite interest.

The brutal truth: people lie about what they’ll buy. Only actions with friction count. Surveys, upvotes, and “I’d definitely use this” are noise.

Not all engagement is equal. Someone entering a credit card proves more than someone clicking “like.” So when you read the results, weight the signals by how much commitment each one took:

| Signal tier | What counts | Weight |
|---|---|---|
| 🥇 Gold | They tried to pay you. Credit card entered, deposit made, payment attempted. Pre-order with payment, deposit collected, annual plan purchased. | 100% |
| 🥈 Silver | Real commitment, no money yet. Booked a demo, filled a detailed form, gave a phone number, accepted a calendar invite. | 70% |
| 🥉 Bronze | Time + effort spent. Watched the full demo video, returned to the page 3+ times, read the long-form FAQ. | 30% |
| 🚫 Worthless | Email signup with one field. “Notify me when it launches.” Upvotes. Likes. Survey “yes I’d pay for this.” | 10% |

How to read the page:

- Drive ~500–2,000 targeted visitors from a single source (ad campaign, Twitter post, niche community post). Mixed-source traffic muddies the signal.

- Calculate a weighted conversion rate using the tiers above. A page that converts 3% to Gold is wildly stronger than one that converts 30% to Worthless.

- Anything above 1% Gold-tier conversion is a strong indicator. Below 0.5% Gold means people like the idea but won’t pay for it, which is the same as “won’t buy” once it’s real.
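
The reading steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not ShipFit’s implementation: the `read_fake_door` function is hypothetical, the weighted rate is assumed to be the weight-times-count sum divided by visitors, and each visitor is assumed to be counted once at their strongest tier. The text gives no rule for the band between 0.5% and 1% Gold, so the sketch labels it “inconclusive.”

```python
# Tier weights taken from the signal-tier table.
TIER_WEIGHTS = {"gold": 1.0, "silver": 0.7, "bronze": 0.3, "worthless": 0.1}


def read_fake_door(visitors, tier_counts):
    """Summarize a Fake Door test.

    `tier_counts` maps a tier name to the number of visitors whose
    strongest action fell in that tier (each visitor counted once,
    which is an assumption of this sketch).
    """
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    weighted = sum(TIER_WEIGHTS[tier] * n for tier, n in tier_counts.items())
    gold_rate = tier_counts.get("gold", 0) / visitors
    if gold_rate > 0.01:
        verdict = "strong"
    elif gold_rate < 0.005:
        verdict = "weak"
    else:
        verdict = "inconclusive"  # 0.5%-1% band: not covered by the text
    return {
        "weighted_rate": weighted / visitors,
        "gold_rate": gold_rate,
        "verdict": verdict,
    }


# Made-up numbers: 3% Gold versus 30% Worthless on 1,000 visitors each.
page_a = read_fake_door(1000, {"gold": 30})
page_b = read_fake_door(1000, {"worthless": 300})
print(page_a)
print(page_b)
```

Under this formula the two example pages land on the same weighted rate (about 3%), because 30 Gold at 100% and 300 Worthless at 10% sum to the same number. Only the Gold rate separates them, so it is worth reading the Gold rate first and treating the weighted rate as secondary.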

The trap most founders fall into: counting Worthless-tier signal as validation. Email signups have weak correlation with revenue because the friction is too low. Anyone signs up for things. Almost nobody types in a credit card.

ShipFit’s Stage 7 (Will They Pay?) uses this exact tier system. The output is a Smoke test plan with the Gold / Silver / Bronze / Worthless signal weights laid out, so when you run the Fake Door test yourself you’re not counting Worthless-tier engagement as validation.

Method 3. Paid pre-orders (real commitment)

Ask buyers to pre-pay, even at a discount. This is the strongest signal short of an actual product launch.

The number that matters isn’t “how many pre-orders did you get.” It’s “what conversion rate from interested-buyer-conversation to pre-order did you achieve.” 3-5+ pre-orders from 30 conversations is meaningful. 1 pre-order from 100 conversations is a no.
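
As a rough sketch of that comparison, the two reference points can be turned into rates and used as bounds. The `classify` function is hypothetical, the “unclear” label for results between the two reference points is an assumption (the text gives no rule there), and so is the requirement of at least 3 pre-orders, added so that one pre-order from three conversations does not count as meaningful.

```python
def preorder_conversion(preorders, conversations):
    """Conversion rate from interested-buyer conversations to paid pre-orders."""
    if conversations <= 0:
        raise ValueError("need at least one conversation")
    return preorders / conversations


# The two reference points quoted in the text.
MEANINGFUL_FLOOR = preorder_conversion(3, 30)   # 3 pre-orders from 30 conversations
CLEAR_NO = preorder_conversion(1, 100)          # 1 pre-order from 100 conversations


def classify(preorders, conversations):
    """Label a pre-order result as 'meaningful', 'no', or 'unclear'."""
    rate = preorder_conversion(preorders, conversations)
    # Require an absolute minimum as well as a rate (assumption of this sketch).
    if preorders >= 3 and rate >= MEANINGFUL_FLOOR:
        return "meaningful"
    if rate <= CLEAR_NO:
        return "no"
    return "unclear"


print(classify(4, 30))
print(classify(1, 100))
print(classify(2, 40))
```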

Stripe makes this easy: collect card details with a SetupIntent (the card is saved and nothing is charged until launch), or use a “deposit” pattern (a refundable amount that proves intent without committing the buyer to a launch date you might miss).

Method 4. Signed letters of intent (B2B only)

For B2B SaaS, an LOI is a non-binding written commitment from a buyer that, when product X exists at price Y, they will buy. It includes the buyer’s signature and contact info, and ideally specifies expected contract size.

LOIs are the gold standard for B2B validation because they require sign-off from someone with at least nominal budget authority. If you can’t get an LOI, you can’t get the eventual sale either. The friction is the test.

Realistic target: 3-5 signed LOIs from your target buyer profile before you build. If 10 enterprise sales conversations produce zero LOIs, your buyer profile, pricing, or value prop is wrong.

What doesn’t work (and why)

These methods feel like validation but produce no useful signal:

Surveys. Stated preference and revealed preference are different species. People say they’ll pay for things they’ll never pay for, and vice versa. Survey data has near-zero correlation with revenue. The only useful “survey” is the one Rahul Vohra developed at Superhuman (read more on the Superhuman PMF Engine), and that one is administered AFTER product launch to existing users, not pre-launch to strangers.

Friends and family interviews. Friends are polite. Family is more polite. They want you to succeed and will say what they think helps. If your validation set is your closest 6 founder friends, you have validated absolutely nothing. You’ve collected encouragement.

Counting upvotes / likes / followers. Vanity metrics. Eric Ries’s term in The Lean Startup (2011). They feel like progress. They correlate weakly with revenue at best.

“I’d use that” responses to a pitch. The moment you pitch, the conversation contaminates. Useful interviews never include a pitch in the first 25 minutes. The Mom Test exists to operationalize this.

Influencer endorsements. A well-known person saying nice things about your idea is helpful for distribution later, but it’s not validation. Influencers will support things they’d never personally pay for.

The signal threshold (when you’re “validated enough”)

Use this as a gate before writing any production code:

Four conditions, all required:

- 10+ buyer conversations with people who match your defined persona (not friends, not vague “potential users”).

- At least 7 of them described the pain in their own words, without you prompting or pitching first.

- At least 3 of them committed to pay. Pre-order, deposit, or signed LOI for B2B. At your proposed price point.

- You have a “why now” answer that doesn’t depend on a hypothetical future trend. The market timing has to be present-tense.

If any of the four conditions is unmet: not validated. Don’t write production code yet. Stay in interview mode.

If all four are met: write the MVP. The validation work is done; product execution is the next risk.
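
The gate can be written down as a simple checklist function. This is a minimal sketch; the `ValidationEvidence` class and its field names are hypothetical, and the numbers are the thresholds from the four conditions above.

```python
from dataclasses import dataclass


@dataclass
class ValidationEvidence:
    persona_conversations: int       # conversations with buyers matching the persona
    unprompted_pain_count: int       # buyers who described the pain without prompting
    paid_commitments: int            # pre-orders, deposits, or signed LOIs at your price
    has_present_tense_why_now: bool  # "why now" holds today, not in a hypothetical future


def unmet_conditions(e):
    """Return the labels of unmet conditions; an empty list means the gate is passed."""
    checks = [
        (e.persona_conversations >= 10, "10+ persona-matched conversations"),
        (e.unprompted_pain_count >= 7, "7+ buyers described the pain unprompted"),
        (e.paid_commitments >= 3, "3+ commitments to pay at the proposed price"),
        (e.has_present_tense_why_now, "present-tense 'why now' answer"),
    ]
    return [label for ok, label in checks if not ok]


# Made-up example: strong interviews, but only two paid commitments so far.
evidence = ValidationEvidence(12, 8, 2, True)
missing = unmet_conditions(evidence)
print("validated" if not missing else f"not validated, missing: {missing}")
```

Returning the list of unmet conditions, rather than a bare yes/no, shows which part of the validation work to go back to.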

Common mistakes that ruin validation

1. Pitching too early. Founders mention their idea in the first 5 minutes. The interview is then useless because the buyer is now reacting to the idea, not describing their actual life. Hold the pitch until the last 5 minutes, and only pitch if you need a commitment.

2. Asking hypotheticals. “Would you use this?” predicts nothing about whether they would, in fact, use this. Replace every hypothetical with a past-tense behavioral question.

3. Mistaking interest for intent. “Sounds cool” is not intent. “Here’s $50 for the first version” is intent. Stop confusing the two.

4. Stopping at 3-5 conversations. Patterns emerge around 10-15 conversations. Anything less is anecdote. The brain wants to declare victory at 3 conversations because doing 12 is harder. Push through.

5. Building before defining the buyer. Validation that isn’t tied to a specific buyer persona is meaningless. “Founders” isn’t a buyer. “B2B SaaS founders running pre-seed startups in Europe who haven’t shipped yet” is. Get the buyer specific in stage 2 of the playbook before you start any validation work.

Where this fits in the 9-stage playbook

Idea validation isn’t one stage in the ShipFit 9-stage flow. It’s the methodology that runs through stages 1-7. Those seven stages are:

- Stage 1 (Worth Building?) — Market verdict. TAM/SAM/SOM, competitor landscape, and a “why now” trend check. First cut at whether the market exists at all.

- Stage 2 (Who Pays?) — Primary buyer. Proto-personas paired with willingness-to-pay and LTV/CAC estimates so the buyer is named with enough economic precision to drive every later stage.

- Stage 3 (What Hurts?) — Core problems, ranked. Mom Test discipline + Do-Say Gap analysis applied to surface the pain points by frequency × intensity. The output is your above-the-line problem list.

- Stage 4 (How to Win?) — Solution approach. 3 candidate solutions scored on problem-solution fit, each mapped to a moat via 7 Powers + Blue Ocean Strategy.

- Stage 5 (What’s V1?) — MVP scope. MoSCoW + Feasibility-Impact applied to feature candidates, producing three MVP packages (Lean / Balanced / Full) so the scope-vs-effort tradeoff is explicit before you commit.

- Stage 6 (How to Charge?) — Pricing model. Van Westendorp PSM produces a defensible price band; the Pricing Position chart places your candidate price against the competitor pack.

- Stage 7 (Will They Pay?) — Demand proof. Smoke test plan + pre-sales playbook (pre-orders, deposits, LOIs, Fake Door conversion) — the behavioral evidence that runs before the build commitment.

Each stage’s output feeds the next. Skipping a stage means the one after it runs on assumed inputs, which is how 9-stage playbooks turn into 18-month build cycles for products nobody buys.

What ShipFit does at this stage

ShipFit doesn’t run the interviews for you (no software can). What it does:

- Generates a buyer-specific question set for Stage 3 (What Hurts?) — Mom-Test-style questions phrased in past-tense behavior, tuned against the buyer profile you defined at Stage 2.

- Captures and synthesizes your interview takeaways alongside the framework lenses (Mom Test, JTBD), so the pain points feed into Stage 4’s solution approaches and Stage 5’s MVP scoring.

- Outputs a Smoke test plan and Pre-sales playbook at Stage 7 with the Gold/Silver/Bronze/Worthless signal tiers and behavioral validation criteria — the framework you run against your own data once buyers see it.

- Generates handoff artifacts at Stage 9 — Universal Prompt or tool-specific exports for Cursor, Claude Code, Windsurf, v0, Lovable, Replit, Gemini/Antigravity — so the validated decisions land in your dev environment instead of dying in a Notion doc.

If you’ve never run idea validation before, start your playbook free and walk through stages 1-7. The free tier covers stages 1-2; the $5 Taster Pack covers all the validation work.

The bottom line

Idea validation is unfun, slow, and ego-bruising. Building is fast, dopaminergic, and feels like progress. The founders who shipped products people bought spent more time on validation than building. The founders who shipped products nobody bought did the opposite.

The math is brutal but simple: 2-4 weeks of validation saves 6-12 months of building the wrong thing. Skip it and you join the 35-38% of failed startups that cite “no market need” as the cause. Run it and you find out, cheaply and early, whether the idea you’re committed to is one worth committing to.

Related frameworks

The Mom Test

The Mom Test is Rob Fitzpatrick's framework for customer interviews that generate real signal. Not praise. Three rules, applied step-by-step, with examples.

Jobs to be Done (JTBD)

Jobs to be Done reframes every product decision: customers don't buy features, they hire products to get a job done. Here's how to apply it without faking it.

The Lean Startup

Eric Ries's Lean Startup, stripped of consultant fluff. Validated learning, Build-Measure-Learn, MVP, pivot or persevere. What it means and where it gets misapplied.
