The launch-plan template most founders are missing

Most product launch plan templates online are activity lists: “post on Twitter, email your list, submit to Product Hunt, do an outreach campaign.” That isn’t a plan. That’s a checklist of activity dressed as strategy.

A real launch plan answers seven questions, in order. Each one gates the next. If you can’t answer Q1, the rest is wishful thinking.

Below is the template. Copy it, fill in the gaps, ship it. If you’d rather have it generated for you with framework checks at every step, run the 9-step ShipFit playbook; the launch plan is what it produces at stage 8.

The 7 decisions a launch plan locks down

1. Who is the launch FOR?

Not “founders.” Not “developers.” Not “small businesses.” A specific buyer with budget authority and a problem painful enough to act on launch day.

The right answer reads like: “B2B SaaS founders running pre-seed startups in Europe who’ve already raised but haven’t shipped.” That’s specific enough to map to a channel.

If you can’t write your buyer in one sentence at this level of specificity, you don’t have a launch problem. You have a Who Pays problem, and your launch will fail no matter how many channels you pick.

2. Where does that buyer already pay attention?

The honest version of go-to-market. Not “where do I want them to be,” but “where are they actually spending hours of their week before they’ve ever heard of me.”

Examples:

- B2B SaaS pre-seed founders → Twitter, Indie Hackers, specific Slack communities (e.g., On Deck), maybe a16z/YC newsletters

- Enterprise IT buyers → Gartner / Forrester reports, vendor-led webinars, AWS re:Invent and similar conferences

- Indie hackers / solo developers → Hacker News, Indie Hackers, dev.to, specific subreddits, Twitter (still)

- Healthcare practitioners → trade publications, conference exhibits, peer referrals, almost never social

If you genuinely don’t know where your buyer pays attention, you do not yet have validation. Go back to the Mom Test and add five more interviews where you ask “what newsletters or communities do you read for this kind of thing?”

3. Which 2 channels score highest by ICE?

Once you have a list of 5-10 plausible channels, run ICE scoring on each:

| Channel | Impact (1-10) | Confidence (1-10) | Ease (1-10) | ICE score |
|---|---|---|---|---|
| Twitter launch thread | 7 | 8 | 9 | 504 |
| Indie Hackers post | 6 | 9 | 8 | 432 |
| Outreach to YC alumni | 8 | 5 | 4 | 160 |
| Product Hunt launch | 8 | 6 | 6 | 288 |
| LinkedIn post | 4 | 5 | 8 | 160 |

Pick the top two. Ignore the rest. Three channels means each one gets one-third of your attention and produces one-third of the result. Two channels means you can actually be present in both for the first 48 hours, which is when launch traction is decided.
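If you keep your channel list in a script rather than a spreadsheet, the scoring can be sketched in a few lines of Python. The channel names and scores are the example values from the table above; the function names `ice_score` and `top_channels` are made up for this illustration.

```python
# Example ICE inputs from the table: (impact, confidence, ease), each 1-10.
channels = {
    "Twitter launch thread": (7, 8, 9),
    "Indie Hackers post": (6, 9, 8),
    "Outreach to YC alumni": (8, 5, 4),
    "Product Hunt launch": (8, 6, 6),
    "LinkedIn post": (4, 5, 8),
}


def ice_score(impact, confidence, ease):
    """ICE is the product of the three 1-10 scores."""
    return impact * confidence * ease


def top_channels(scored, n=2):
    """Return the n highest-scoring channels as (name, score) pairs."""
    ranked = sorted(
        scored.items(),
        key=lambda item: ice_score(*item[1]),
        reverse=True,
    )
    return [(name, ice_score(*scores)) for name, scores in ranked[:n]]


for name, score in top_channels(channels):
    print(f"{name}: {score}")
```

With the example scores, the two channels that survive are the Twitter launch thread (504) and the Indie Hackers post (432).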

4. What is the launch message?

One sentence that does three jobs: names the problem, names the buyer, makes the buyer feel seen.

Wrong: “ShipFit is the AI-powered all-in-one decision engine for founders building products.”

Right: “If you’ve ever shipped a product nobody bought, this is the playbook you should have run before writing code.”

The right version names a specific failure pattern (shipped + nobody bought), implies a specific buyer (founders who’ve launched at least once), and offers a specific fix. The wrong version is an SEO description.

Test the message before launch day. Send it to 20 of your interview subjects and ask: “Would this make you click? Why or why not?” If under half say yes, the message is wrong, not the channel.

5. What does week 0 actually look like, by the hour?

Most launches fail on day 1 because nobody mapped the hour-by-hour. Here’s the actual template:

T-7 days (one week out)

- Final QA pass on the product

- Landing page copy locked (no more “should I change the headline?” debates)

- Pricing page final

- 3 onboarding flows tested with strangers, not friends

T-3 days

- Personal outreach list ready: 50-200 warm contacts who match the buyer profile and might be willing to share

- DMs / emails drafted, personalized hooks per contact (no “hey checking in” mass blasts)

- Backup support coverage scheduled (if launch goes well, you’ll need to answer 100+ messages in 24 hours)

T-1 day

- Communities (Slack, Discord, Reddit) where you’ve been active for at least 3 months: warm-up posts queued, not promotional yet

- Email list teaser sent (“something I’ve been working on, more tomorrow”)

Day 0 (launch day)

- 8am buyer-timezone: primary channel post (Twitter thread, Product Hunt submission, etc.)

- 11am: secondary channel post

- 1pm: email blast to your full list

- 3pm: reach out to first 20 warm contacts personally

- All day: respond to every comment within 1 hour; track which message variants get the most replies

Day +1

- Reach out to next 50 warm contacts

- Update the landing page based on day-0 confusion (“everyone asked X, add a sentence about it”)

- Post follow-up in primary channel (“first 24 hours: here’s what we learned”)

Day +3

- Honest post-launch retro: what worked, what didn’t, and how the metric is tracking

- Decide where to double down

Day +7

- Measure against the metric you set in Q6 below

- Decide: scale, pivot the message, or rethink the channel mix
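To put the schedule above on a real calendar, the day offsets can be turned into dates. This is a minimal sketch: the launch date is a placeholder, and `MILESTONES` and `launch_calendar` are names invented for this example.

```python
from datetime import date, timedelta

# Day offsets relative to launch day, matching the schedule headings above.
MILESTONES = {
    "T-7 days": -7,
    "T-3 days": -3,
    "T-1 day": -1,
    "Day 0": 0,
    "Day +1": 1,
    "Day +3": 3,
    "Day +7": 7,
}


def launch_calendar(launch_day):
    """Map each milestone label to its calendar date."""
    return {
        label: launch_day + timedelta(days=offset)
        for label, offset in MILESTONES.items()
    }


# Placeholder launch date, for illustration only.
for label, day in launch_calendar(date(2025, 3, 18)).items():
    print(f"{label:9} {day.isoformat()} ({day:%a})")
```

Printing the weekday next to each date makes it easy to spot a milestone that lands on a weekend before you commit to the launch date.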

6. What metric proves the launch worked?

Set this BEFORE launch. Not after.

Pick one of these, in increasing order of signal strength:

- Email signups (weak: many people sign up for things they’d never pay for)

- Demos booked (medium: the friction is calendar time, which is real but limited)

- Free trials started with non-trivial actions (medium-strong: they did something inside the product)

- Paid conversions (strong: money down)

- Revenue (strongest: pre-orders, contracts, deposits)

For a pre-revenue product with a paid tier, “paid conversions in 14 days” is usually the right metric. Set a target number, then read the result against it: below 30% of target, the launch had a positioning problem; at 30-70%, the launch was OK but the channel mix was wrong; at 70% or above, scale what worked.

This step is where most launch plans cheat. They define success vaguely (“get the word out”) and then any outcome counts as a win, which is the same as no outcome counting. Pick a number. Live with it.
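The 30% and 70% bands can be written down as a small function so the verdict is fixed before the numbers come in. This is only a sketch of those thresholds; the function name `launch_verdict` and the example target of 50 are assumptions for illustration.

```python
def launch_verdict(actual, target):
    """Classify a launch result against the pre-set target.

    Bands follow the thresholds in the text: below 30%, 30-70%, 70% and up.
    """
    if target <= 0:
        raise ValueError("target must be a positive number")
    ratio = actual / target
    if ratio < 0.30:
        return "positioning problem"
    if ratio < 0.70:
        return "launch was OK, channel mix was wrong"
    return "scale what worked"


# Hypothetical target of 50 paid conversions in 14 days.
for paid in (10, 20, 40):
    print(paid, "->", launch_verdict(paid, target=50))
```

Writing the function before launch day is the point: the bands are then a commitment, not something you negotiate with yourself afterwards.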

7. What’s the kill / pivot rule?

If launch underperforms, what do you do?

Three honest answers:

- Kill the channel mix, not the product if the channels were wrong but the people who DID find it converted well. (E.g., Product Hunt launch flopped but 5/10 people from a Slack community converted to paid. The channel was wrong, the product is fine, double down on Slack.)

- Kill the message, not the product if the channels were right but the conversion was weak. (E.g., right people saw it, but few clicked through to signup. The message wasn’t matching the pain.)

- Kill the launch and go back to validation if the conversion was weak everywhere. The product or buyer is wrong. This is the hardest call to make and the one most founders avoid for 6-12 months too long.

Lock this rule before launch. After launch, you will be too emotionally invested to pick #3 without a pre-commitment.
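One way to pre-commit is to write the three answers as a decision function before launch day. The sketch below is one possible reading of the rules, not a formula from any framework: it reduces the post-launch picture to two yes/no observations, and both parameter names are invented for this example. What counts as “converted well” is something you would define alongside your metric.

```python
def kill_pivot_decision(some_source_converted_well, right_buyers_saw_it):
    """Map two post-launch observations to one of the three pre-committed answers.

    Only meant for a launch that already missed its target.
    """
    if some_source_converted_well:
        # Demand showed up somewhere, so the channels were the problem.
        return "kill the channel mix, keep the product"
    if right_buyers_saw_it:
        # The audience was right but the pitch did not land.
        return "kill the message, keep the product"
    # Weak conversion everywhere: the product or the buyer is wrong.
    return "kill the launch, go back to validation"


# Example: the main channel flopped, but a small Slack cohort converted to paid.
print(kill_pivot_decision(some_source_converted_well=True, right_buyers_saw_it=False))
```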

The free template (copy this into Notion or a doc)

PRODUCT LAUNCH PLAN: [Product Name]

Launch Date: [YYYY-MM-DD]

1. BUYER (one sentence, specific):

_________________________________________________

2. CHANNELS (top 2 by ICE score, with scores):

Channel A: ____________________ ICE: ___

Channel B: ____________________ ICE: ___

3. MESSAGE (one sentence, names the problem + buyer):

_________________________________________________

4. WEEK SCHEDULE (T-7 through Day +7; see the hour-by-hour schedule above)

5. SUCCESS METRIC + TARGET NUMBER:

Metric: ____________________

Target: ____________________

Floor (below this, kill): ____________________

6. KILL/PIVOT RULES (pre-commit before launch):

If channels wrong: ______________________________

If message wrong: _______________________________

If conversion weak everywhere: __________________

7. WARM CONTACT LIST (50-200 names, with hooks):

[Spreadsheet or table here]

Print it. Post it on the wall. Cross things off as you do them.

The launch math nobody runs

Run this before launch day, not after.

Estimated launch-day reach × estimated conversion to signup × estimated signup-to-paid conversion = estimated paid customers from launch.

Realistic numbers for a pre-revenue B2B SaaS with a $19/mo tier:

- Launch reach (top-of-funnel impressions): 5,000-50,000 over 7 days for a well-targeted launch

- Signup conversion: 1-3% of impressions (so 50-1,500 signups)

- Signup-to-paid conversion: 2-10% in the first 30 days (so 1-150 paid customers)

If those numbers don’t add up to a launch you’re proud of, the gap isn’t a channel problem. Either your buyer pool is too small or the conversion rate you assumed at some stage is unrealistic. Better to know this before you spend two weeks on launch prep than after.
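The funnel math above is a three-term multiplication, so it is easy to run for both ends of the range. The sketch below uses the reach and conversion figures from the list and the $19/mo price; the function name `estimated_paid_customers` and the monthly-revenue line are additions for illustration.

```python
def estimated_paid_customers(reach, signup_rate, paid_rate):
    """Launch reach x signup conversion x signup-to-paid conversion."""
    return reach * signup_rate * paid_rate


PRICE_PER_MONTH = 19  # the $19/mo tier from the example

# Pessimistic and optimistic ends of the ranges quoted above.
scenarios = {
    "low": (5_000, 0.01, 0.02),
    "high": (50_000, 0.03, 0.10),
}

for name, (reach, signup_rate, paid_rate) in scenarios.items():
    customers = estimated_paid_customers(reach, signup_rate, paid_rate)
    monthly_revenue = customers * PRICE_PER_MONTH
    print(f"{name}: ~{round(customers)} paid customers, ~${round(monthly_revenue)}/mo")
```

At these assumptions the spread runs from about 1 paid customer to about 150. Swap in your own reach and rates; if even the optimistic row looks too small, that is the signal to revisit the buyer pool before launch prep starts.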

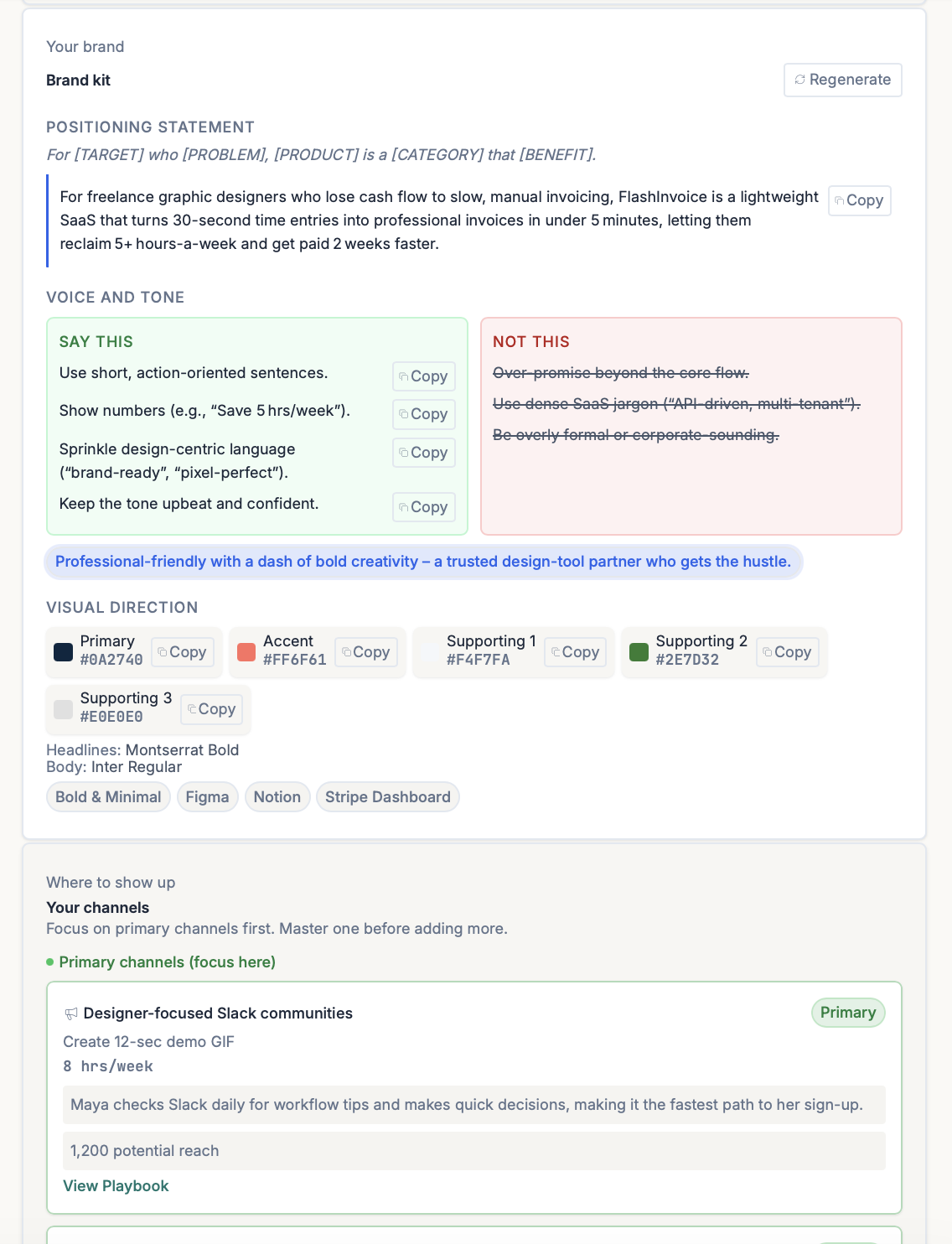

What ShipFit ships at the end of stage 8

If you run the full 9-step ShipFit playbook, stage 8 produces a populated version of the template above: your buyer is locked in by stage 2, your channels are scored by ICE in stage 8, your message draws from the JTBD job statement you wrote in stage 4, and the kill/pivot rules borrow logic from your pricing validation thresholds.

The Product Playbook fills in as you go

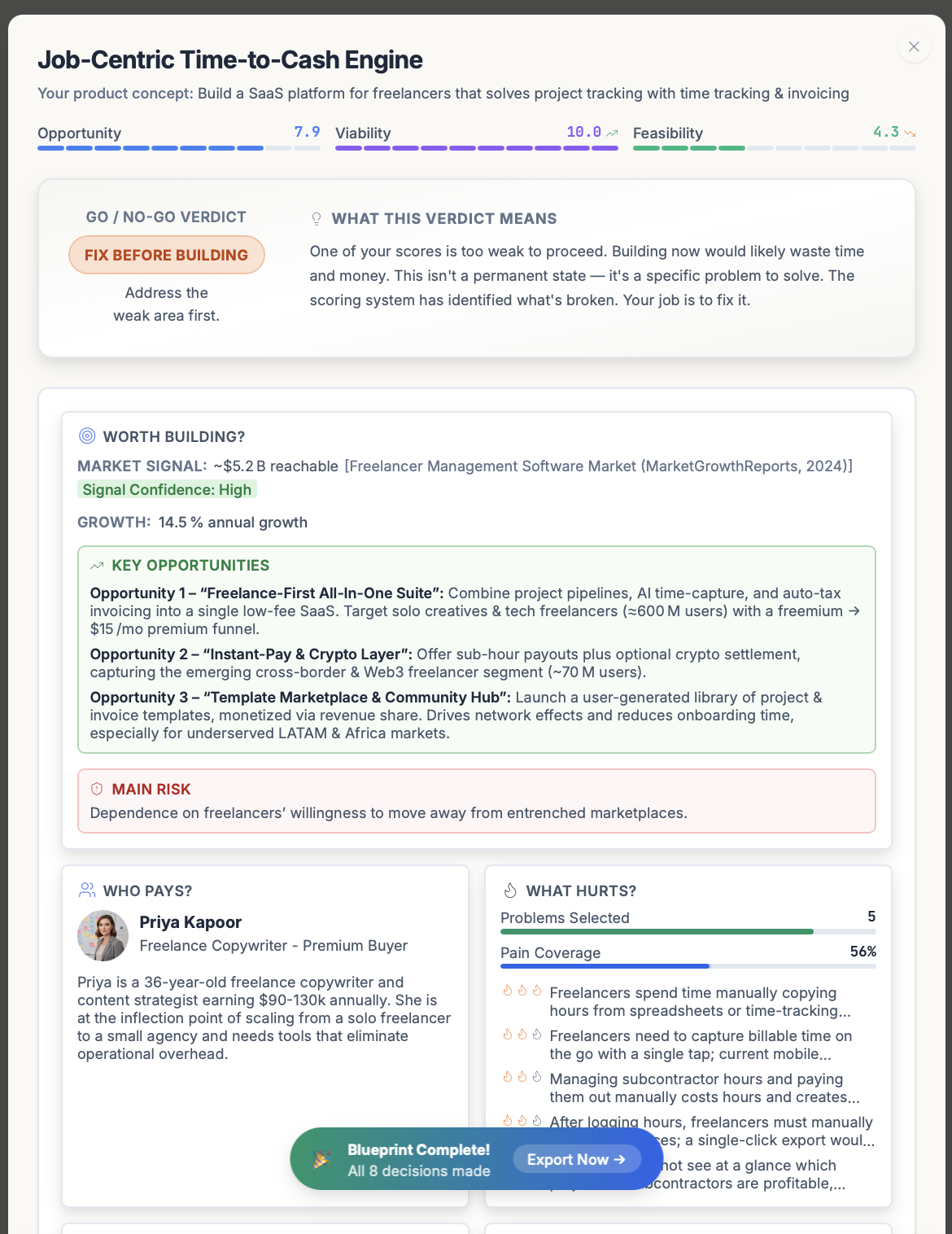

Every decision you make across the 9 stages writes back into a single living document: the Product Playbook. Each section unlocks as you complete the matching stage, so by the time you reach the launch decision the page already shows your verdict, your buyer, your above-the-line pains, your winning angle, your V1 scope, and your pricing position. Stage 8 then drops the launch slot in: pricing model, entry price, price position, positioning line, and primary channels.

Once all 9 decisions are made the playbook hits its Blueprint Complete state, with a single Export Now CTA that hands the playbook off to stage 9.

Then stage 9 (What to Export?) turns the launch plan into specs for the tools you actually build with. Either a Universal Prompt (one prompt, any AI chat: ChatGPT, Claude, Gemini), or tool-specific configurations: .cursorrules + domain rules for Cursor, CLAUDE.md + slash macros for Claude Code, .windsurfrules + pin guide for Windsurf, plus presets for v0.dev, Lovable, Replit, and Gemini/Antigravity. The same validated playbook becomes the spec your codebase ships from.

The power combo most founders pick: Cursor for editing + Claude Code for terminal. The exports are pre-configured for that pairing.

The kindest version of the truth

Most launches don’t fail because the founder picked the wrong channel. They fail because the founder skipped the buyer-definition step at the top of this template, then optimized their launch for whoever happened to show up. Whoever shows up usually isn’t a buyer. They’re traffic.

Lock the seven decisions before you ship. The launch will look smaller (two channels, one message, one metric) and convert dramatically better than the 30-channel “marketing plan” your friends will tell you to run.

Related frameworks

ICE Scoring

ICE Scoring multiplies Impact × Confidence × Ease to rank features and experiments. The honest version forces you to defend Confidence with evidence.

The Lean Startup

Eric Ries's Lean Startup, stripped of consultant fluff. Validated learning, Build-Measure-Learn, MVP, pivot or persevere. What it means and where it gets misapplied.

Jobs to be Done (JTBD)

Jobs to be Done reframes every product decision: customers don't buy features, they hire products to get a job done. Here's how to apply it without faking it.

